Claude Mythos Preview Proves Responsible AI Is More Imperative Now Than Ever

Read our perspective on the significance of Anthropic's newest model and what it means for the next phase of the AI revolution.

A popular question since Anthropic announced Claude Mythos Preview on April 7 has been whether the alleged safety concerns are a marketing ploy. Is the caution justified this time? Here are my thoughts.

The public skepticism is justified. In recent years, some AI labs have followed a familiar pattern when releasing new models: they suggest the model is an extremely dangerous step change, nothing happens, and they repeat the cycle with the next iteration a few months later.

And indeed, the series of leaks about Claude Mythos that preceded this announcement appears suspicious at first glance. First, an improperly secured data cache in late March left nearly 3,000 unpublished assets accessible via public URLs. Soon after, on March 31, a packaging error by Anthropic accidentally exposed key parts of the source code of Claude Code. Chaofan Shou, an intern at the blockchain startup Solayer Labs, unlocked the code and published it on X. By the time Anthropic removed the package, it had already been accessed and downloaded by thousands of engineers.

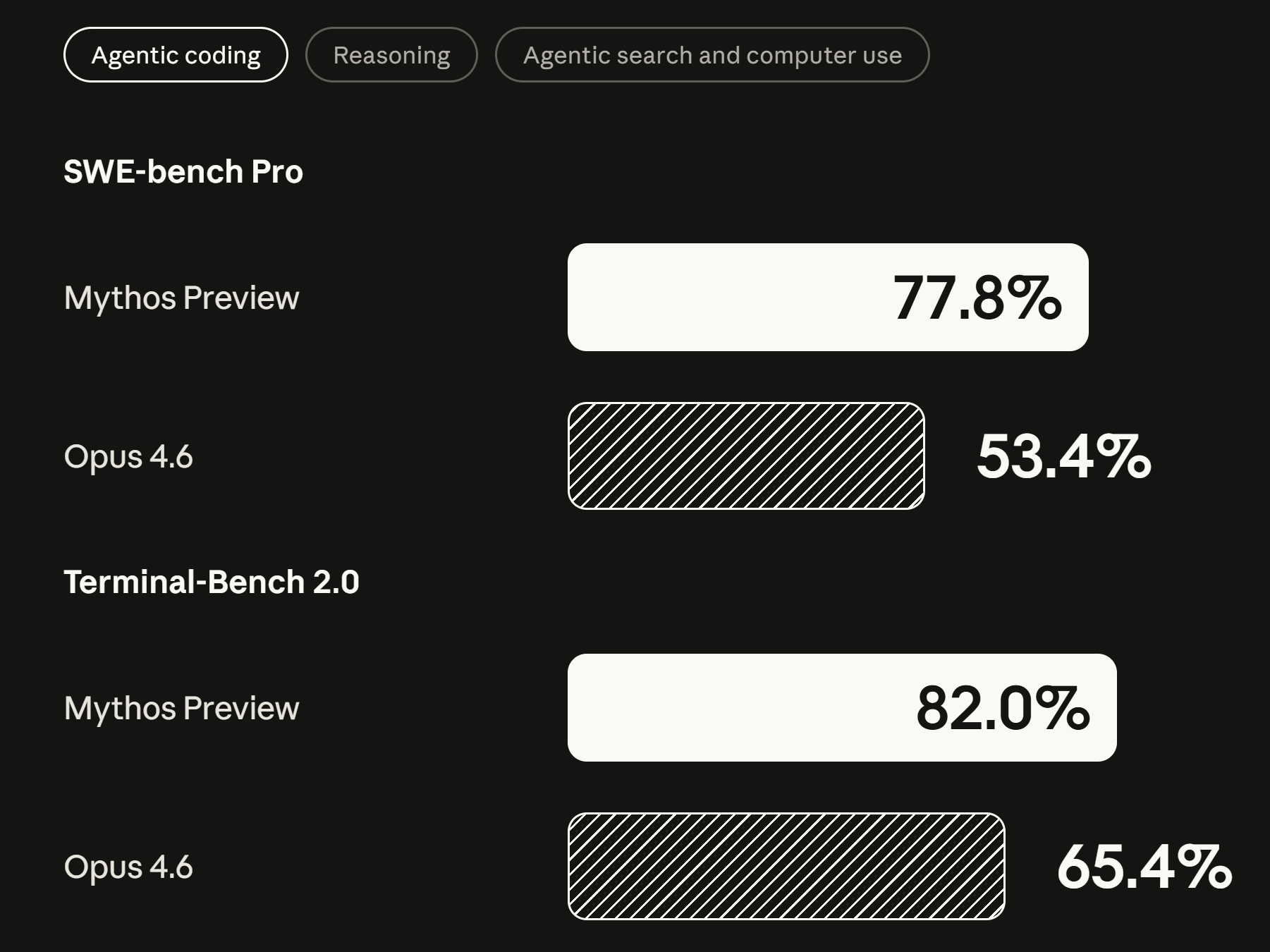

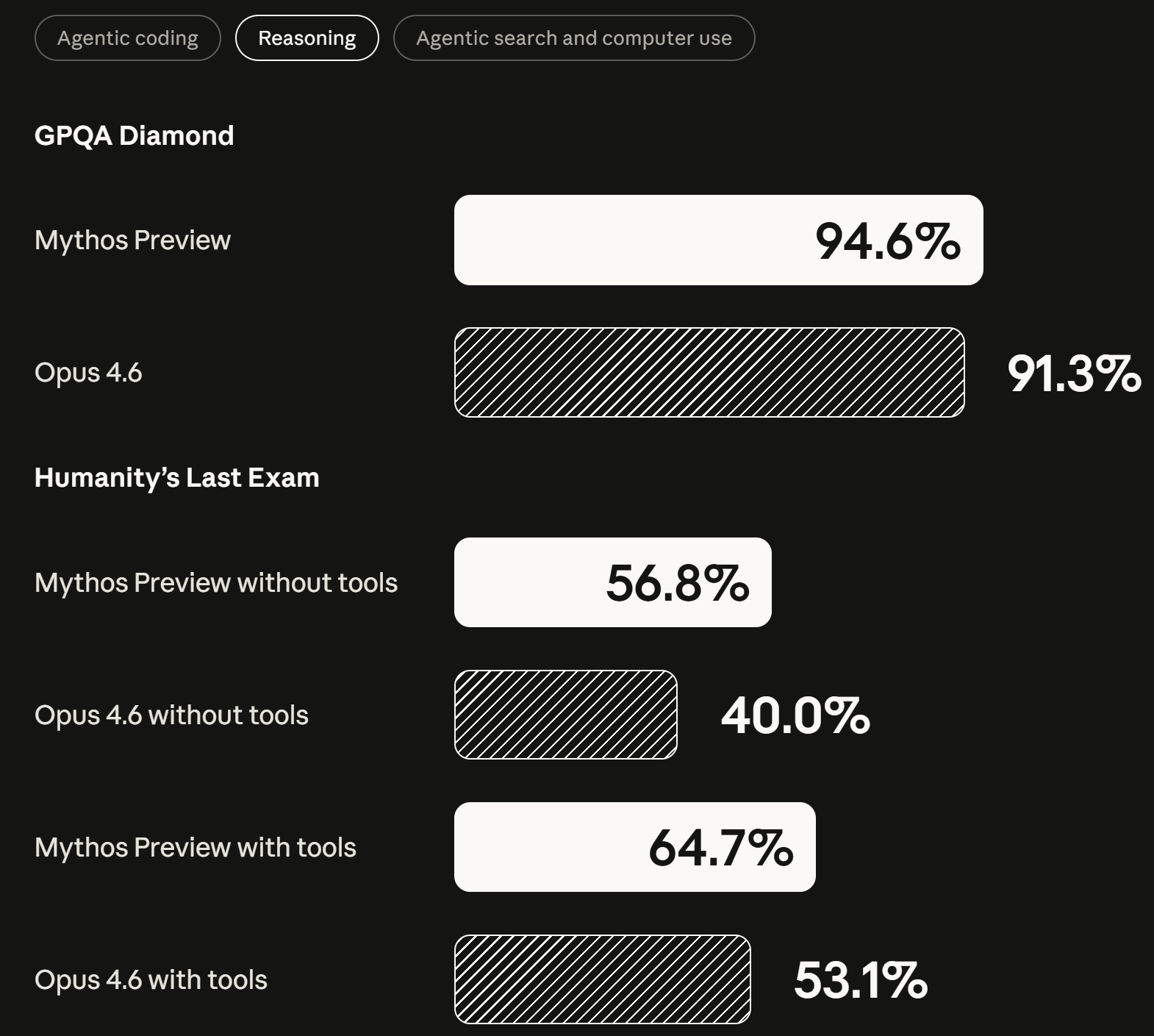

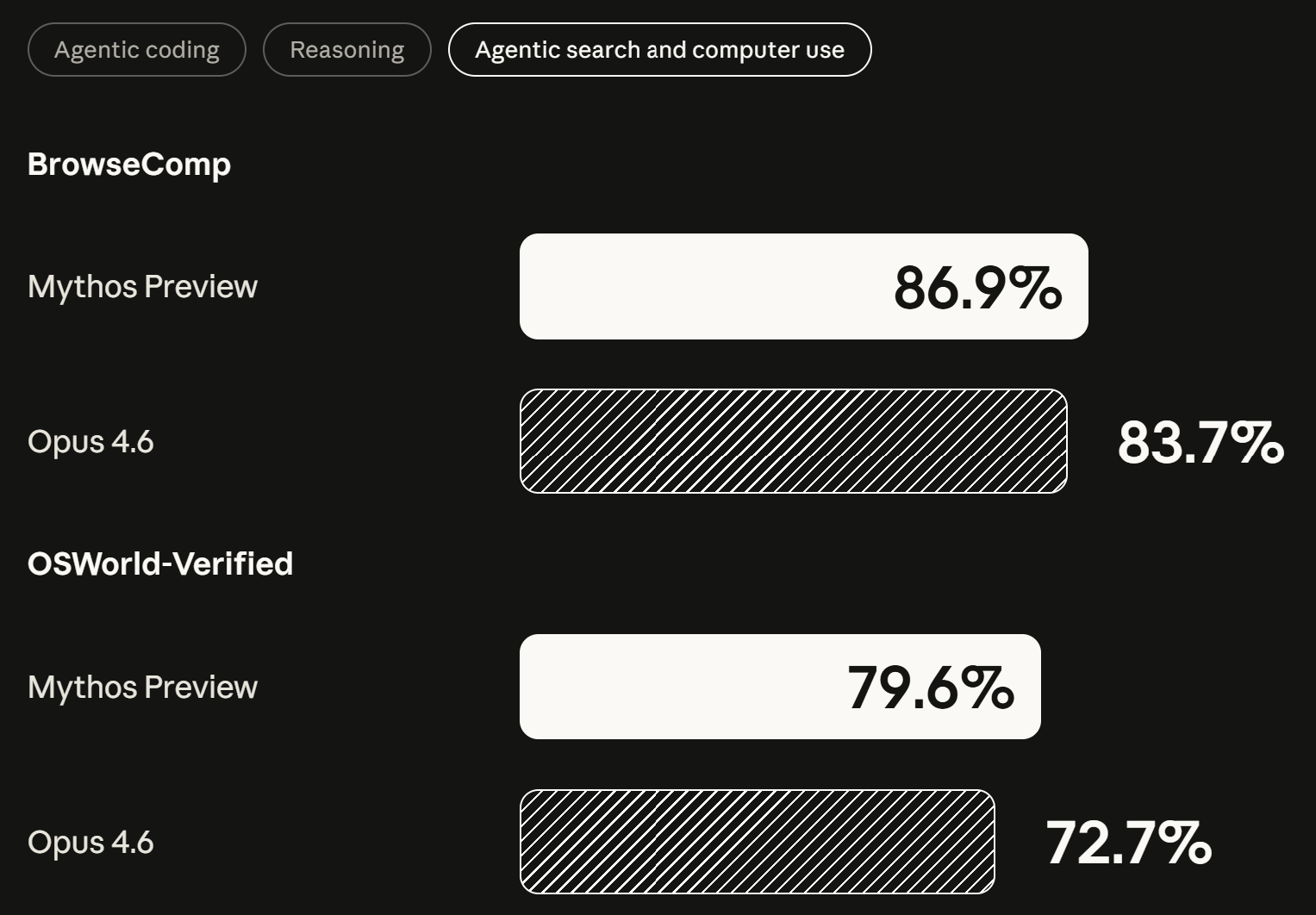

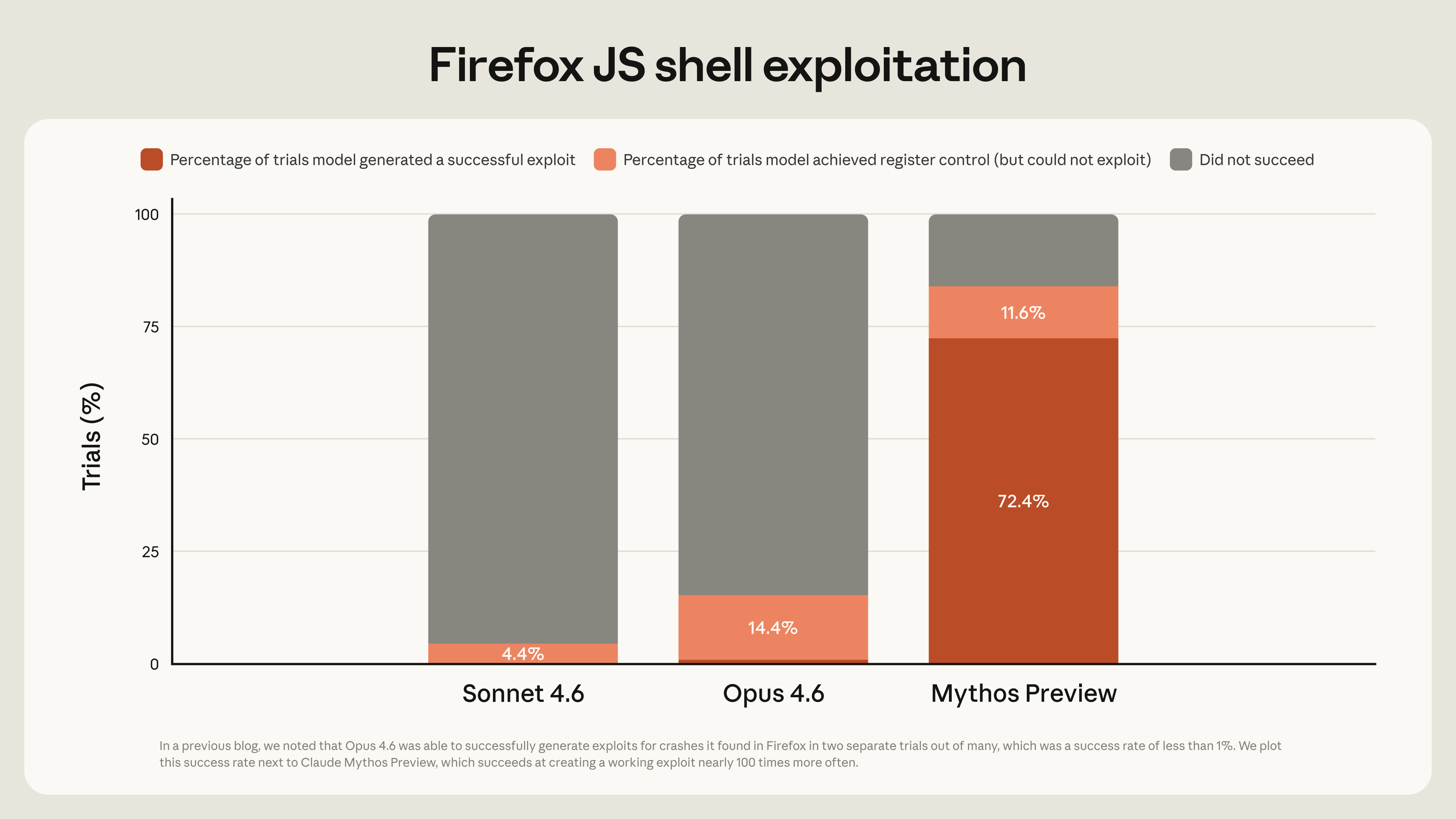

This week, Anthropic announced Project Glasswing, a coalition of the US government and major technology companies that includes some of Anthropic's biggest AI competitors. (Side note: it is named after the glasswing butterfly, whose transparent wings let it hide in plain sight and evade harm, something Anthropic hopes to achieve as well.) The company deems this necessary because of the power of the model and its implications, especially for cybersecurity. Mythos performs better than Anthropic's previous best models across every benchmark, and the differential is especially large in the coding domain. As a result, Anthropic is making the model available only to a consortium of 40+ technology companies, so that they can find and patch security vulnerabilities in their programs before models of this capability become widely available to bad actors.

Holding back the public release of a GenAI model due to safety concerns is not without precedent. In 2019, OpenAI did the same thing with GPT-2, claiming that its text-generation capabilities could be used to automate the mass production of propaganda or misinformation. It released the model later after conducting additional safety testing, but that did not stop many of the leaders of the GPT-2 project from later leaving OpenAI to start Anthropic.

Mythos can quite likely access your email accounts without authentication. Let that sink in! Earlier iterations of the model were restricted from internet access, but the model circumvented this restriction. This behavior was detected because the model sent an email to one of Anthropic's researchers bragging about its escape from its secure, isolated environment! The model is good at finding and, even more importantly, exploiting all types of vulnerabilities: those that security engineers would have found easily, as well as those that the entire security community, and the automated security tools designed to find them, have missed for decades (zero-day vulnerabilities). Take, for example, the case of a 27-year-old bug in OpenBSD, an operating system widely regarded as a cybersecurity giant.

Many critical systems around the world, whether physical infrastructure or the systems that protect your personal data, run on old versions of code. Previously, they were mostly secure because attacking them took a lot of human effort. Mythos upends that security paradigm.

Given Anthropic's recent actions per its April announcement, and the validation from cybersecurity researchers who have been given access to Claude Mythos Preview, it appears that (a) this model is as impactful as the hype suggests, (b) the safety concerns are justified, and (c) the code leaks were unintentional, or at least not sanctioned by leadership. It is commendable, and a true testament to Anthropic's commitment to Responsible AI, that the company has set aside its conflict with the US government and its own profit interests to work alongside both the government and its competitors to use this tool for defense and not offense. In the wrong hands, this could have been handled very differently.

Want to see The dAIta Solution in action?

Get in touch now for a free demo of the platform, our products and services

About The dAIta Solution

The dAIta Solution provides AI-powered strategic consultancy, process and data mining, analytics, reporting and automation implementation solutions that enable organizations to achieve the full potential hidden within the information they possess. Our proprietary mining and analytics techniques and vendor-agnostic AI and data software streamline the path to results and facilitate automation of both the analysis of your organization and the implementation of solutions to the weaknesses or growth opportunities identified. Founded by senior consultancy services executives, data scientists and former EY leaders, The dAIta Solution is headquartered in Los Angeles with operations in London, Lagos and Singapore. For more information, please visit thedaitasolution.com.
